"An engine for the imagination": an interview with David Holz, CEO of AI image generator Midjourney

AI-generated artwork is quietly beginning to reshape culture. Over the last few years, the ability of machine learning systems to generate imagery from text prompts has increased dramatically in quality, accuracy, and expression. Now, these tools are moving out of research labs and into the hands of everyday users, where they're creating new visual languages of expression and, most likely, new types of trouble.

Only a few dozen top-flight image-generating AIs are thought to exist right now. They're tricky and expensive to create, requiring access to millions of images used to train the system (it looks for patterns in the pictures and copies them) and a great deal of computational grunt (for which costs vary, but a million-dollar price tag isn't out of the question).

Right now, the output of these systems is mostly treated as novelty when it gets splashed on a magazine cover or used to generate memes. But as we speak, artists and designers are integrating this software into their workflow, and in a short amount of time, AI-generated and AI-augmented art will be everywhere. Questions about copyright (who owns the image? Who made it?) and about potential dangers (like biased output or AI-generated misinformation) will have to be dealt with quickly.

As the technology goes mainstream, though, one company will be able to take some credit for its ascendancy: a 10-person research lab named Midjourney, which makes an eponymous AI image generator accessed through a Discord chat server. Although the name might be unfamiliar, you've probably seen the output from Midjourney's system floating about your social media feeds already. To generate your own, you just join Midjourney's Discord, type a prompt, and the system makes an image for you.

"A lot of people ask us, why don't you just make an iOS app that makes you a picture?" Midjourney's founder, David Holz, told The Verge in an interview. "But people want to make things together, and if you do that on iOS, you have to make your own social network. And that's pretty hard. So if you want your own social experience, Discord is really great."

Sign up for a free account, and you get 25 credits, with all images generated in public chatrooms. After that, you'll have to pay either $10 or $30 a month, depending on the number of images you want to make and whether or not they're private to you.

This week, though, Midjourney is expanding access to its model, allowing anyone to create their own Discord server with their own AI image generator. "We're going from a Midjourney universe to a Midjourney multiverse," as Holz puts it. And he thinks the results will be incredible: an outpouring of AI-augmented creativity that's still only the tip of the iceberg.

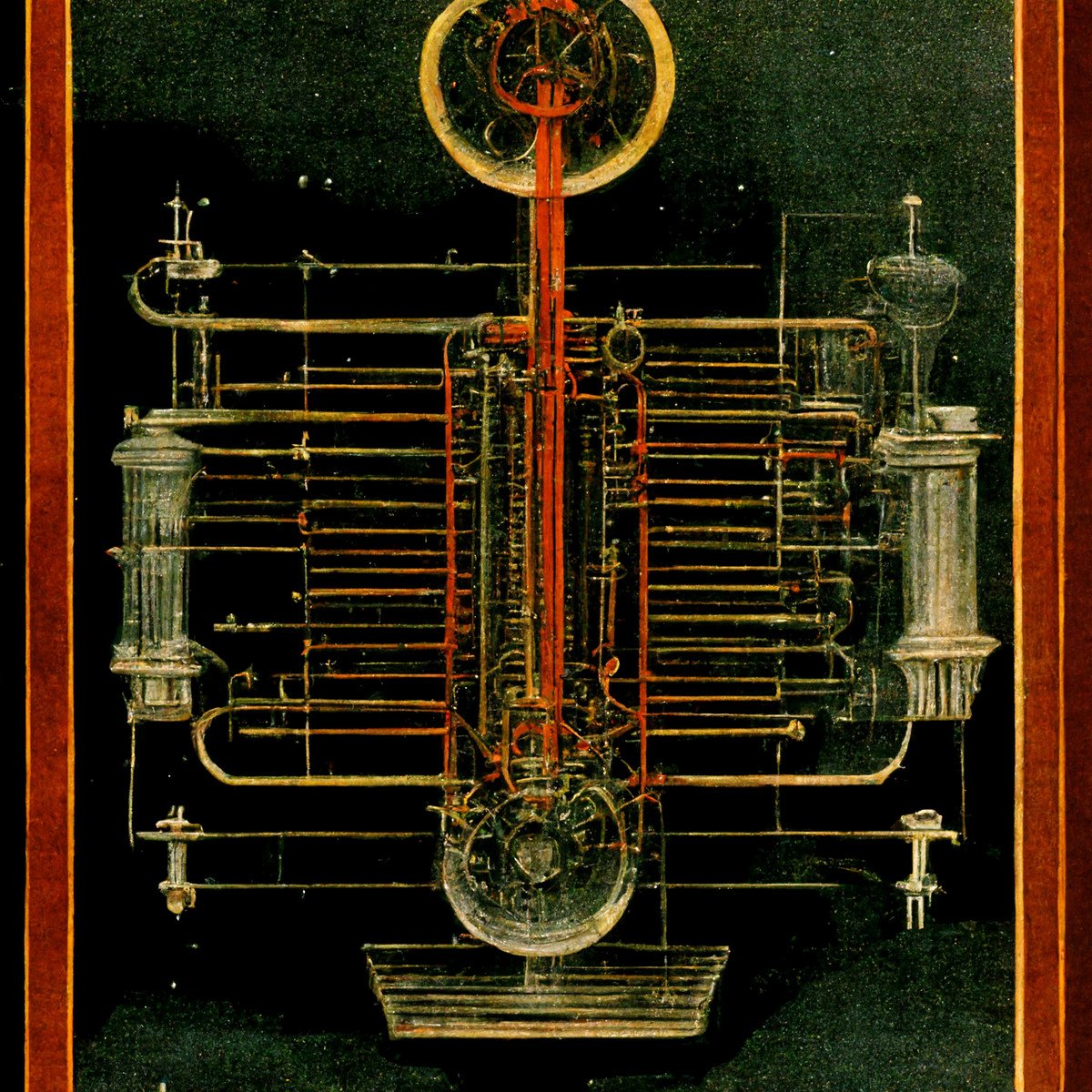

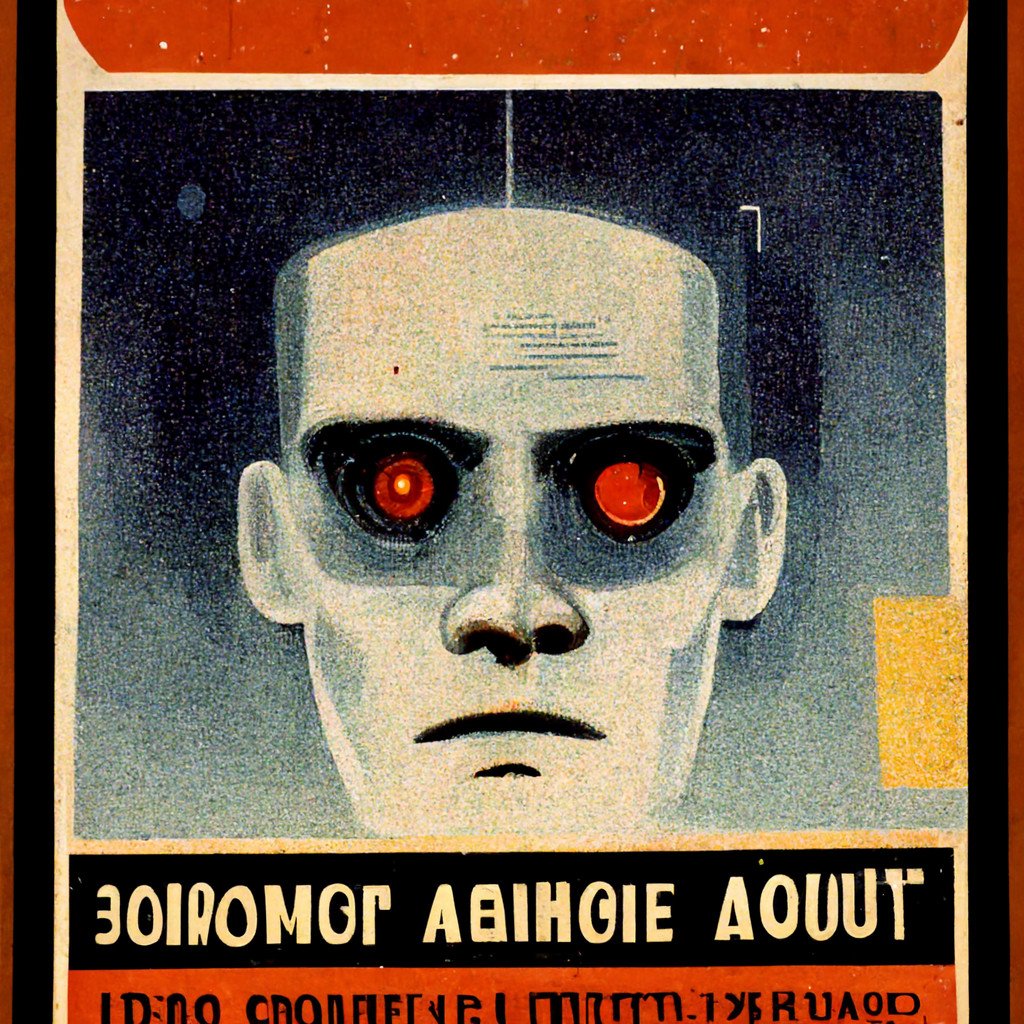

To find out more about Holz's ambitions with Midjourney (about why he's building "an engine for the imagination" and why he thinks AI is more like "water" than "a tiger"), we rang him up for an interview. And, of course, we got Midjourney to illustrate our conversation.

The interview below has been condensed and lightly edited for clarity.

It'd be great to start with a bit about yourself and Midjourney. What's your background? How did you get in this scene? And what is Midjourney: a company, a community? How would you describe it?

So, my name is David Holz, and I guess I'm a serial entrepreneur. My brief history would be: I had a design business in high school. I went to college for physics and maths. I was working on a PhD in fluid mechanics while working at NASA and Max Planck. I got overwhelmed at one point and put all those things aside. So I moved to San Francisco and started a technology company called Leap Motion around 2011. And we sold these hardware devices that would do motion capture on your hands, kind of inventing a lot of the gestural interface space.

I founded Leap Motion and ran that for 12 years, [but] eventually, I was looking for a different environment instead of a big venture-backed company, and I left to start Midjourney. Right now, it's pretty small (we're like 10 people), we have no investors, and we're not really financially motivated. We're not under pressure to sell something or be a public company. It's just about having a home for the next 10 years to work on cool projects that matter, hopefully not just to me but to the world, and to have fun.

We're working on a lot of different projects. It's going to be a wide and diverse research lab. But there are themes: things like reflection, imagination, and coordination. And what we're starting to become well known for is this image creation stuff. And we don't think it's really about art or making deepfakes, but how do we expand the imaginative powers of the human species? And what does that mean? What does it mean when computers are better at visual imagination than 99 percent of humans? That doesn't mean we will stop imagining. Cars are faster than humans, but that doesn't mean we stopped walking. When we're moving huge amounts of stuff over huge distances, we need engines, whether that's airplanes or boats or cars. And we see this technology as an engine for the imagination. So it's a very positive and humanistic thing.

Lots of labs and companies are working on similar technologies that turn text into imagery. Google has Imagen, OpenAI has DALL-E, and there are a handful of smaller projects like Craiyon. Where did this tech come from, where do you see it going in the future, and how does Midjourney's vision differ from others in this space?

So, there have been two breakthroughs [in AI that led to image generation tools]. One is understanding language, and the other is the ability to create images. And when you combine those things, you can create images through the understanding of language. We saw those technologies coming up, and we saw the trends that these will be better at making images than people and it'll be really fast. Within the next year or two, you'll be able to make content in real time: 30 frames a second, high resolution. It'll be expensive, but it'll be possible. Then, in 10 years, you'll be able to buy an Xbox with a giant AI processor, and all the games are dreams.

From a raw technology standpoint, those are just kind of facts, and there's no way to get around that. But from a human standpoint, what the hell does that mean? "All the games are dreams, and everything is malleable, and we're going to have AR headsets": what the hell does that mean? So the humanistic element of that is kind of unfathomable. And the software required to actually make that a thing that we can wield, it's completely off the map, and I think that's our focus.

We started off testing the raw technology in September last year, and we were immediately finding really different things. We found very quickly that most people don't know what they want. You say: "Here's a machine you can imagine anything with. What do you want?" And they go: "dog." And you go "really?" and they go "pink dog." So you give them a picture of a dog, and they go "okay" and then go do something else.

Whereas if you put them in a group, they'll go "dog" and someone else will go "space dog" and someone else will go "Aztec space dog," and then all of a sudden, people understand the possibilities, and you're creating this augmented imagination: an environment where people can learn and play with this new capacity. So we found that people really like imagining together, and so we made [Midjourney] social. And we have this giant Discord community, like, it's one of the largest Discords, with roughly a million people, where they're co-imagining things in these shared spaces.

Do you see this human collective as parallel to the machine collective? As a sort of counterbalance to these AI systems?

Well, there isn't really a machine collective. Every time you ask the AI to make a picture, it doesn't really remember or know anything else it's ever made. It has no will, it has no goals, it has no intention, no storytelling ability. All the ego and will and stories: that's us. It's just like an engine. An engine has nowhere to go, but people have places to go. It's kind of like a hive mind of people, super-powered with technology.

Inside the community, you have a million people making images, and they're all riffing off each other, and by default, everybody can see everybody else's images. You have to pay extra to pull out of the community, and usually, if you do that, it means you're some type of commercial user. So everyone's ripping off each other, and there's all these new aesthetics. It's almost like aesthetic accelerationism. And they're all bubbling up and swirling round, and they're not AI aesthetics. They're new, interesting, human aesthetics that I think will spill out into the world.

Does this openness help keep things safe as well? Because there's a lot of discussion about AI image generators being used to generate potentially harmful stuff, whether that's straightforwardly nasty imagery (gore and violence) or misinformation. How do you stop that from happening?

Yeah, so, it's amazing. When you put someone's name on all the pictures they make, they're much more regimented in how they use it. That helps a lot.

That said, we've still had some issues at times where, unfortunately, like, the way that social media works everywhere else, you can make a living by causing outrage, and there's a motivation for some people to come into the community, pay for privacy, then spend a month trying to create the most outrageous and horrifying shock imagery possible, and then try to publish it on Twitter. Then we have to put our foot down on that and say, "That's not what we're about; that's not the type of community we want."

Whenever we see that, we stomp it out. We ban words if we have to. We've collected words for things like photorealistic ultragore, and we've banned every word within a mile of that.

What about realistic faces? Because that's another vector for creating misinformation. Does the model generate realistic faces?

It will generate celebrity faces and stuff like that. But we don't generally... we have a default style and look, and it's artistic and beautiful, and it's hard to push [the model] away from that, meaning you can't really force it to make a deepfake right now. Maybe if you spend 100 hours trying, you can find some right combination of words that makes it look really realistic, but you have to really work hard to make it look like a photo. And personally, I don't think the world needs more deepfakes, but it does need more beautiful things, so we're focused toward making everything beautiful and artistic looking.

Where did you get the training data for the model from?

Our training data is pretty much from the same place as everybody else's, which is pretty much the internet. Pretty much every big AI model just pulls off all the data it can, all the text it can, all the images it can. Scientifically speaking, we're at an early point in the space, where everyone grabs everything they can, they dump it in a huge file, and they kind of set it on fire to train some huge thing, and no one really knows yet what data in the pile actually matters.

So, for example, our most recent update made everything look much, much better, and you might think we did that by throwing in a lot of paintings [into the training data]. But we didn't; we just used the user data based off what people liked making [with the model]. There was no human art put into it. But scientifically speaking, we're very, very early. The entire space has maybe only trained two dozen models like this. So it's experimental science.

How much did it cost to train yours?

I would say, training models in this space, I can't speak about our specific costs, but I can say general things. Training image models is probably around $50,000 every time you do it right now. And you never get it right in one try, so you have to use three tries or 10 tries or 20 tries (and you do need a lot), so it adds up. It is expensive. It's more than what most universities could spend, but it's not so expensive that you need a billion dollars or a supercomputer.

The costs will, I'm sure, come down for both training and running. But the cost to run it is actually quite high. Every image costs money. Every image is generated on a $20,000 server, and we have to rent those servers by the minute. I think there's never been a service for consumers where they're using thousands of trillions of operations in the course of 15 minutes without thinking about it. Probably by a factor of 10, I'd say it's more compute than anything your average consumer has touched. It's actually kind of crazy.

Speaking of training data, one contentious aspect here is the issue of ownership. Current US law says you can't copyright AI-generated art, but we don't quite know whether people can assert copyright over images used in training data. Artists and designers work hard to develop a particular style, but what happens if their work can now be copied by AI bots? Have you had many discussions about this?

We do have a lot of artists in the community, and I'd say they're universally positive about the tool, and they think it's gonna make them much more productive and improve their lives a lot. And we are constantly talking to them and asking, "Are you okay? Do you feel good about this?" We also do these office hours where I'll sit on voice for four hours with like 1,000 people and just answer questions.

A lot of the famous artists who use the platform, they're all saying the same thing, and it's really interesting. They say, "I feel like Midjourney is an art student, and it has its own style, and when you invoke my name to create an image, it's like asking an art student to make something inspired by my art. And generally, as an artist, I want people to be inspired by the things that I make."

But there's surely a huge self-selection bias at work there because the artists who are active in the Midjourney Discord are bound to be the ones who will be excited by it. What about the people who say, "It's bullshit; I don't want my art to be eaten up by these huge machines"? Would you allow these people to remove themselves from your system?

We don't have a process for that yet, but we're open to it. So far, I would say it doesn't have that many artists in it. It's not that deep of a dataset. And the ones who have made it in have been giving us, like, "we don't really feel intimidated by this" answers. Right now, it's so new; I think it makes sense to play it by ear and be dynamic. So we're constantly talking to people. And actually, the number one request we get right now from artists is they want it to be better at stealing their styles, so they can use it as part of their art flow even better. And that's been surprising to me.

It might be different for other [AI image] generators because they try to make something look like the exact thing. But we have more of a default style, so it really does look like an art student being inspired by something else. And the reason we do that is because you always have defaults, so if you say "dog," we could give you a photo of a dog, but that's boring. From a human standpoint, why would you want that? Just go to Google image search. So we try to make things look artistic.

That's something you've mentioned a few times in our conversation (the default art style of Midjourney), and I'm really fascinated by this idea that each AI image generator is its own microcosm of culture, with its own preferences and expressions. How would you describe Midjourney's particular style, and how have you consciously developed it?

[Laughing] It's a little ad hoc! We try lots of things, and every time we try a new thing, we render out a thousand images. And there's not really an intention to it. It should look generally beautiful. It should respond to specific things and vague things. We definitely want it to not look like photos. We might make a realistic version at one point, but we wouldn't want it to be the default. Perfect photos make me a little uncomfortable right now, though I could see legitimate reasons why you might want something more realistic.

I think the style would be a bit whimsical and abstract and weird, and it tends to blend things in ways you might not ask, in ways that are surprising and beautiful. It tends to use a lot of blues and oranges. It has some favorite colors and some favorite faces. If you give it a really vague instruction, it has to go to its favorites. So, we don't know why it happens, but there's a particular woman's face it likes to draw (we don't know where it comes from, from one of our 12 training datasets), but people just call it "Miss Journey." And there's one dude's face, which is kind of square and imposing, and he also shows up sometimes, but he doesn't have a name yet. But it's like an artist who has their own faces and colors.

Speaking of these sorts of defaults, one big challenge within the image-generation space is dealing with bias. There's research that shows that if you ask an AI image model to draw a CEO, the CEO is always a white man, and when you ask it to output a nurse, the nurse is always a woman and often a person of color. How have you dealt with that challenge? Is it a big problem for Midjourney or of more concern for corporate companies who want to monetize these systems?

Well, Miss Journey is definitely more of a problem than a feature, and we're working on something now that will try to break up the faces and give you more variety. But there are downsides of that, too. Like, we had a version where it just completely destroyed Miss Journey, but if you really wanted, say, Arnold Schwarzenegger as Danny DeVito, then it would completely destroy that request [too]. And the tricky thing is getting that to work without wiping out whole genres of expression. Because it's really easy to have a switch that bumps up diversity, but it's difficult to have it only turn on when it should.

What I can say is that it's never been easier to make an image with whatever diversity you want: you just use the word. You're always one word away from creating, you know... like, I was playing around with African cyberpunk wizards, and it looks beautiful, and it's fucking cool, and all I needed was like one word to tell the model what you want.

So, just to pull back a bit, you've talked a lot about how you don't see the work you're doing in Midjourney as, shall we say, practical. I mean, it's obviously very hands-on, but your motivation is more abstract: about the relationship between humans and AI; about how we can use AI in this humanistic way, as you put it. Some people in the AI space tend to think about this technology in the grandest possible terms; they compare it to gods, to sentient life. How do you feel about this?

For a while, I've been trying to figure out what is [Midjourney's AI image generator]? Because you can say it's like an engine for imagination, but there's something else, too. The first temptation is to look at it through an art lens. To ask: is this like the invention of photography? Because when photography was invented, paintings got weirder because anybody could take a photo of a face, so why would I paint that picture now?

And is it like that? No, it's not like that. It's definitely weirder. Right now, it feels like the invention of an engine: like, you're making like a bunch of images every minute, and you're churning along a road of imagination, and it feels good. But if you take one more step into the future, where instead of making four images at a time, you're making 1,000 or 10,000, it's different. And one day, I did that: I made 40,000 pictures in a few minutes, and all of a sudden, I had this huge breadth of nature in front of me (all these different creatures and environments), and it took me four hours just to get through it all, and in that process, I felt like I was drowning. I felt like I was a tiny child, looking into the deep end of a pool, like, knowing I couldn't swim and having this sense of the depth of the water. And all of a sudden, [Midjourney] didn't feel like an engine but like a torrent of water. And it took me a few weeks to process, and I thought about it and thought about it, and I realized that, you know what? This is actually water.

Right now, people totally misunderstand what AI is. They see it as a tiger. A tiger is dangerous. It might eat me. It's an adversary. And there's danger in water, too (you can drown in it), but the danger of a flowing river of water is very different to the danger of a tiger. Water is dangerous, yes, but you can also swim in it, you can make boats, you can dam it and make electricity. Water is dangerous, but it's also a driver of civilization, and we are better off as humans who know how to live with and work with water. It's an opportunity. It has no will, it has no spite, and yes, you can drown in it, but that doesn't mean we should ban water. And when you discover a new source of water, it's a really good thing.

And Midjourney is a new source of water?

[Laughing] Yeah, that's a little scary when you say it that way.

I think we, collectively as a species, have discovered a new source of water, and what Midjourney is trying to figure out is, okay, how do we use this for people? How do we teach people to swim? How do we make boats? How do we dam it up? How do we go from people who are scared of drowning to kids in the future who are surfing the wave? We're making surfboards rather than making water. And I think there's something profound about that.